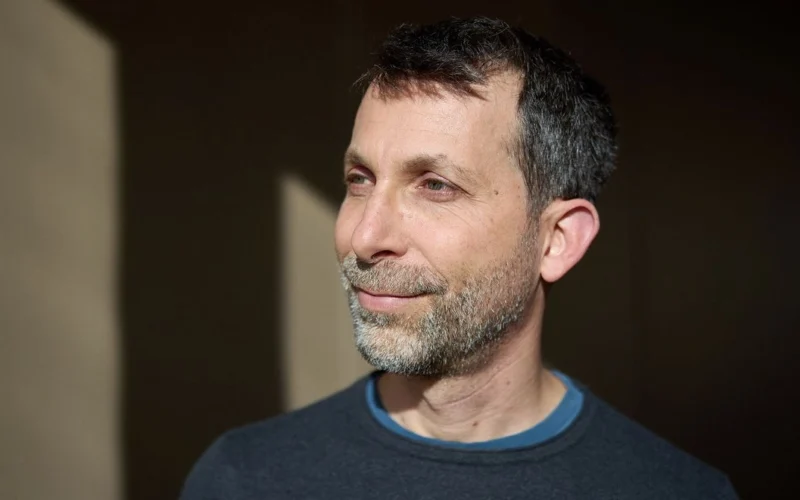

David Silver has emerged as a key figure in the AI landscape. He is best known for leading the development of AlphaGo, the program that defeated Go champion Lee Sedol in 2016. Now he has launched his own venture, Ineffable Intelligence, with the goal of building AI systems that can learn and improve independently of human data. The ambition rests on reinforcement learning, a paradigm in which AI models learn through trial and error, discovering for themselves which actions lead to good outcomes.

Silver’s vision challenges the approach many AI companies currently take: building large language models (LLMs) trained on vast datasets of human-generated text. In a candid discussion, Silver expresses skepticism about the future of LLM-based approaches, arguing that they largely replicate human intelligence rather than developing a genuine capacity to learn. "Human data is like a kind of fossil fuel," he says, suggesting that a self-learning system could instead serve as a renewable source of intelligence.

Reflecting on the gravity of his mission, he likens it to "making first contact with superintelligence." He envisions such self-learning systems not only surpassing human intelligence but also making new scientific and technological discoveries on their own. Growing debate about AI’s potential to outpace human capabilities has added urgency to Silver’s work, and Ineffable Intelligence has raised $1.1 billion at a valuation of $5.1 billion.

At the heart of Ineffable Intelligence’s strategy is the creation of AI agents that learn within immersive simulations. While the specifics of these simulations remain undisclosed, the idea is that they will enable AI systems to collaborate, set and achieve goals, and potentially lead to groundbreaking discoveries.

However, the approach is not without risks. Silver acknowledges the ethical problems that arise when AI systems find solutions that do not align with human values. To address these concerns, the simulations are designed so that researchers can observe how agents behave, particularly how they interact with less capable agents. Ravi Mhatre of Lightspeed Venture Partners, one of the startup’s backers, believes Silver’s methodology could lead to safer forms of superintelligence that are better aligned with human values.

Silver’s belief that reinforcement learning is the most promising path to genuine machine intelligence has deep roots in the history of computer science. Researchers have long debated how best to reproduce human learning in machines, and reinforcement learning has remained a central approach even as attention has shifted to LLMs. Although LLMs underpin many of today’s AI systems, Silver argues for a return to these foundational learning principles.

As major tech firms invest heavily in the race toward superintelligence, Silver stands out in the field for the clarity of his vision and his collaborative approach. His long collaboration with Demis Hassabis, now CEO of Google DeepMind, further adds to his credibility.

Ultimately, Silver posits that the quest for superintelligence transcends technical achievement; it is perhaps the most crucial scientific endeavor of our time, aimed at advancing humanity as a whole.