When Angel, a 24-year-old from Uzbekistan, arrived in Sihanoukville, Cambodia, she eagerly shared her multilingual skills in a recruitment video, showcasing fluency in English, Mandarin, Russian, and Turkish. Unlike traditional job seekers, Angel was vying for a role as an "AI face model," tasked with conducting deepfake video calls to ensnare unsuspecting victims in sophisticated scams.

Her journey reflects a larger trend captured in a WIRED review of numerous Telegram channels, where people from various countries, including Turkey, Russia, Ukraine, and several Asian nations, are applying for similarly dubious positions in Cambodia and elsewhere in Southeast Asia. The region has become a vast hub for organized scam operations, which often rely on human trafficking and force victims to carry out online investment and romance scams.

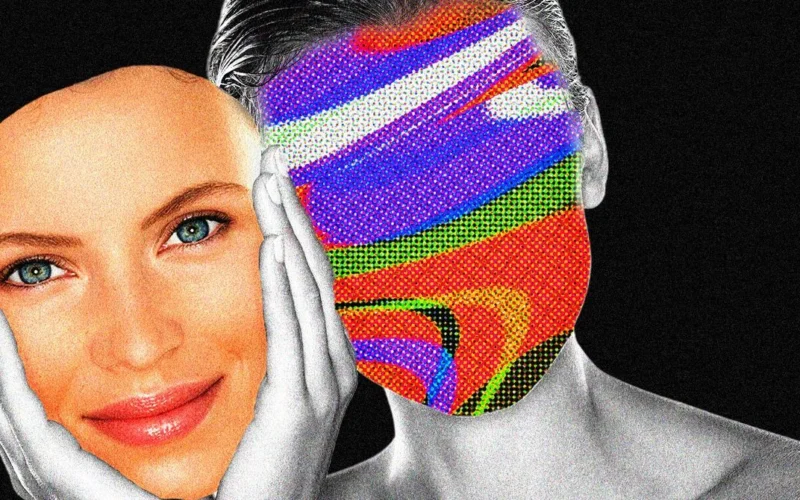

According to Hieu Minh Ngo, a cybercrime investigator, the hiring of AI models has surged within these criminal enterprises. Scammers use AI technology to manipulate victims through fake personas on messaging platforms. Once a connection is established, they use deepfake technology to create a convincing visual presence during video calls, reassuring victims that the persona is real.

Job postings for AI models reflect brutal working conditions, demanding up to 100 video calls a day with minimal time off. Recruitment ads often specify unrealistic requirements or leave compensation details vague, obscuring the true nature of the work. Many applicants are young women seeking financial stability, and some ask for salaries as high as $7,000 a month, an appealing sum against the backdrop of local economies.

Applicants must produce introductory videos and share personal details, including marital status, which further exposes them to potential exploitation. Some applicants are aware of the shady nature of their prospective roles, while others, desperate for employment, overlook the warning signs attached to these positions.

Victims of trafficking within the scam compounds often endure severe exploitation, including physical abuse and harassment. While some who work as AI models may experience slightly better circumstances, reports indicate that poor treatment from superiors is common.

Telegram has not removed the numerous job channels identified by WIRED that promote these roles, despite their clear ties to scamming operations. The platform claims it evaluates content on a case-by-case basis, but the rising prevalence of these jobs underscores a growing issue troubling law enforcement and advocacy groups alike.

As more people fall prey to AI-enabled scams, the implications stretch far beyond individual cases. The trend poses a significant challenge both for authorities and for job seekers, who must weigh the dangers that may lie behind these seemingly modern employment opportunities.