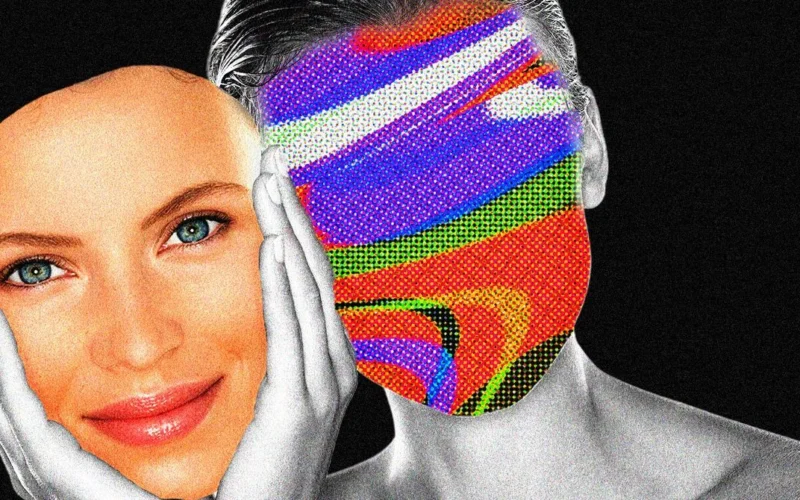

When 24-year-old Angel arrived in Sihanoukville, Cambodia, she boasted impressive language skills in a recruitment video, claiming fluency in English, Chinese, Russian, and Turkish. However, rather than seeking a typical corporate role, Angel was applying for a job as an “AI face model.” The position involves sitting in front of a computer and conducting deepfake video calls aimed at manipulating unsuspecting victims, often as part of elaborate scams.

Angel’s application referenced a year of experience as an AI model. A review of various recruitment postings on Telegram indicates that she is not an outlier. Many individuals, primarily young women from countries including Turkey and several Asian nations, are pursuing roles as AI or “real face” models in Southeast Asia, a region that has become notorious for large-scale scamming operations and the human trafficking associated with them.

According to Hieu Minh Ngo, a cybercrime investigator, the push to hire AI models has surged as cybercriminals integrate advanced technologies like face-swapping into their schemes. Fraudsters typically initiate contact with potential victims on social media using fake personas, often utilizing stolen images to draw attention. As interactions evolve, scammers may rely on AI-generated video calls when victims request proof of authenticity.

The job postings for these AI roles describe demanding schedules and limited downtime. One listing required up to 100 video calls each day, and another suggested as many as 150, all under restrictive working conditions. Applicants are sometimes asked to send daily updates including photos and videos, and some postings include clauses on retaining applicants’ passports, a common tactic used to control victims in scam operations.

Although there are male applicants, the majority of candidates are young women, who often detail previous experience in managing customer interactions or cryptocurrency projects in their applications. These applicants reportedly seek compensation of up to $7,000 a month and ask for specific living conditions. Those conditions may be more favorable than the ones trafficked individuals face, but the applicants still risk ending up in abusive environments.

Although these models have varying degrees of autonomy, reports have surfaced of severe mistreatment and harassment within the compounds where they work. Even those who are there voluntarily may face pressure from their employers.

WIRED’s investigation also revealed job postings containing red flags commonly associated with scams, such as mentions of “clients” (a term used to refer to victims) alongside references to cryptocurrency and investment schemes.

Frank McKenna, an anti-fraud strategist, recounted video calls with alleged AI models in which he suspected filters were being used to obscure their appearances. He found that these models often rotate between contracts and job opportunities within the scam networks.

For further insight into scams involving AI modeling, you can explore Humanity Research Consultancy and the implications of technology on fraud operations.