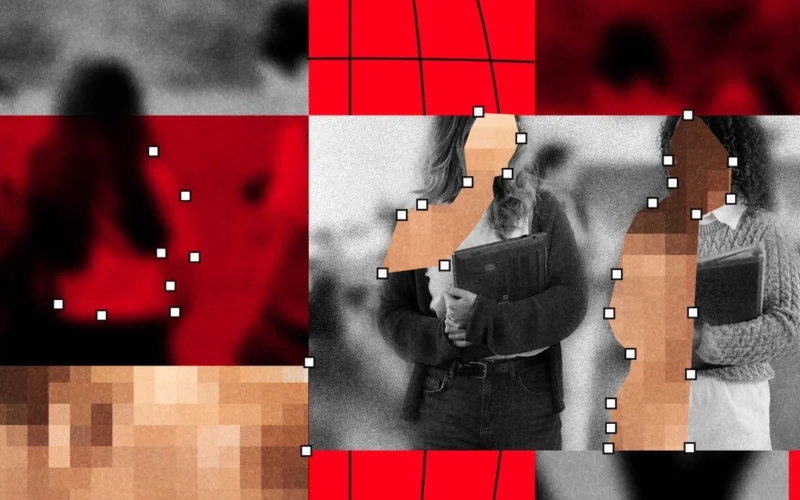

Deepfake imagery in schools, particularly AI-generated nude images, has become a serious and growing problem. A recent analysis by WIRED and Indicator found that nearly 90 schools worldwide have been affected, involving more than 600 students. The crisis has grown as the tools for creating these explicit images have become easier to access.

A typical incident begins with teenage boys downloading photos of their female classmates from social media platforms such as Instagram or Snapchat. Using "nudify" apps, they generate fake nude images or videos and circulate them around their school. The images humiliate and violate the victims, and they leave many with a lasting fear that the images will follow them indefinitely.

Since 2023, incidents of deepfake sexual abuse have been reported in at least 28 countries, and most perpetrators have been high school boys. Because the images depict minors, they are classified as child sexual abuse material (CSAM). The analysis may be the first comprehensive look at real-world cases of AI-generated deepfake abuse in schools.

In North America alone, nearly 30 incidents of deepfake sexual abuse have been reported since 2023. Some involved more than 60 alleged victims, and some led to students being expelled. Reports have also surfaced from South America, Europe, and Australia, showing that the problem is global.

The true number of victims is likely far higher. UNICEF estimates that around 1.2 million children had sexual deepfakes made of them last year. Surveys in several countries show that a significant share of young people report having been victims of sexualized deepfakes, including one in five in Spain.

Experts describe victims who feel intense distress and stay away from school to avoid facing the classmates who created the images. Lawyers representing victims describe severe emotional trauma, with some girls devastated by the knowledge that the images can never be fully taken back.

School responses have varied. Some institutions have stopped publishing student images on social media or in yearbooks because of the risk of deepfake abuse. Many others remain unprepared to handle these incidents, and their responses often do little to ease victims' suffering.

Consequences for offenders vary: some face charges related to CSAM, while others receive other forms of discipline. In March, two Pennsylvania students were sentenced to community service for creating deepfake images of multiple girls.

Student activism against this abuse is growing, with many students speaking up for victims and pushing for legislative change. Recent advocacy has contributed to the passage of laws requiring technology platforms to remove nonconsensual intimate images within 48 hours.

Despite growing awareness and advocacy, many schools and law enforcement agencies remain ill-equipped to handle the rise in deepfake incidents, leaving victims to bear significant emotional and psychological harm. This points to an urgent need for comprehensive training and resources that teach students and staff about digital safety and the legal consequences of creating and sharing such content.